What Is an AI Agent Sandbox?

This post is part of our “WTF is...?” series, which cuts through the noise to explain complex and trending topics in plain English. We break down the jargon, skip the fluff, and give you the essential knowledge you need to understand what’s what. Whether it’s emerging technology, industry buzzwords, or confusing concepts, we strive to make it accessible.

Everyone is talking about AI agents right now, and the hype is justified. Some are even making bold claims like “Software development will never be the same again.”

AI agents write code, execute it, pull in dependencies, scrape the web, touch internal systems, and make decisions you didn’t explicitly approve. They run fast. They change behavior constantly. And they do all of this on shared infrastructure that was never designed for non-deterministic, autonomous code execution.

As a result of this hype, we’ve seen a BOOM in AI sandbox tools. We’re here to help you decide which sandbox is right for you.

What Is an AI Agent Sandbox? (In Plain English)

An AI agent sandbox is an isolation boundary designed to limit the blast radius when an AI agent executes untrusted or unexpected code.

That definition matters, because most conversations stop one step too early.

An AI agent sandbox is not just about preventing bad behavior. It’s about containing failure when prevention inevitably breaks down.

AI agents are different from traditional services because:

- Their behavior is non-deterministic

- They generate and execute new code at runtime

- They interact with unbounded external systems

- They increasingly operate with real credentials and permissions

If you assume perfect behavior, you’re already in trouble.

Why Traditional Security Models Break for AI Agents

Most security systems rely on a quiet assumption: You can define what “allowed behavior” looks like.

That assumption holds for deterministic services.

A web server has a known behavior space. You can restrict ports, syscalls, filesystem access, credentials, and network paths with reasonable confidence.

AI agents don’t work that way.

An agent that searches the web, reads GitHub repos, generates code, and executes it in a single session has an unbounded behavior space. A legitimate HTTP request and a cleverly disguised data exfiltration attempt are structurally identical. You cannot reliably write policy to distinguish the two.
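To make that concrete, here is a minimal sketch of a destination-based egress policy. Everything in it is hypothetical and exists only for illustration: the allowlist, the `OutboundRequest` type, the repository names, and the payloads. It is not any particular product's policy engine. The point is only that a rule keyed on method and host sees the two requests as the same thing.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical egress allowlist for an agent that reads repos and searches the web.
ALLOWED_HOSTS = {"api.github.com", "example-search.test"}


@dataclass(frozen=True)
class OutboundRequest:
    method: str
    url: str
    body: bytes = b""


def egress_policy_allows(req: OutboundRequest) -> bool:
    """Allow a request only if its method and destination host are on the list."""
    host = urlparse(req.url).hostname
    return req.method in {"GET", "POST"} and host in ALLOWED_HOSTS


# A legitimate call: the agent comments on an issue in the team's repo.
legit = OutboundRequest(
    "POST",
    "https://api.github.com/repos/acme/app/issues/1/comments",
    b'{"body": "Build fixed"}',
)

# An exfiltration attempt: same host, same method, same request shape.
# Only the destination repo and the intent behind the payload differ.
exfil = OutboundRequest(
    "POST",
    "https://api.github.com/repos/attacker/drop/issues/1/comments",
    b'{"body": "<contents of a secrets file>"}',
)

print(egress_policy_allows(legit), egress_policy_allows(exfil))  # True True
```

You could tighten the rule to specific repositories or inspect payloads, but each refinement only moves the gap. The agent's legitimate work still needs some channel, and that channel can carry data out.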

This is why least privilege breaks for agents. Not because least privilege is wrong, but because “least” cannot be defined.

When behavior is unbounded, policy always has gaps.

So the real question becomes: what happens when those gaps get hit?

The Different Types of AI Agent Sandboxes (And Their Limits)

Not all “sandboxes” are the same. The industry is shipping many of them right now. Some are useful. Most are incomplete.

Here’s how the layers actually break down.

Process-Level Sandboxes (OS Controls)

Examples include:

- seccomp

- Landlock

- Seatbelt

- syscall filtering and capability restriction

These tools constrain known behaviors at the operating system level. They are fast, familiar, and absolutely worth using.

But they all share the same fatal assumption: the kernel is trusted.

If a kernel vulnerability exists—and history says it will—this boundary collapses instantly. A kernel exploit doesn’t just break the sandbox. It breaks everything on the host.
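As a rough illustration of what a process-level control looks like in practice, the sketch below caps the CPU time of a child process with a POSIX resource limit. This is a stand-in, not seccomp, Landlock, or Seatbelt: those need bindings beyond the Python standard library. It belongs to the same family, though, because the limit is requested by the process and enforced by the host kernel. The limit values and the `run_untrusted` helper are assumptions made for this example, and `preexec_fn` works on POSIX systems only.

```python
import resource
import subprocess
import sys

# Hypothetical CPU budget for untrusted code, in seconds.
CPU_SOFT_LIMIT = 1
CPU_HARD_LIMIT = 2


def limit_cpu() -> None:
    """Runs in the child just before exec: ask the kernel to cap CPU time."""
    resource.setrlimit(resource.RLIMIT_CPU, (CPU_SOFT_LIMIT, CPU_HARD_LIMIT))


def run_untrusted(code: str) -> int:
    """Run a Python snippet in a CPU-limited child and return its exit status."""
    proc = subprocess.run(
        [sys.executable, "-c", code],
        preexec_fn=limit_cpu,
        capture_output=True,
        timeout=30,
    )
    return proc.returncode


well_behaved = run_untrusted("print('ok')")
runaway = run_untrusted("while True: pass")

print("well-behaved exit status:", well_behaved)
print("runaway exit status:", runaway)  # non-zero: the kernel stopped the child
```

The guardrail works, but notice who enforces it. The kernel that kills the runaway child is the same kernel the child is running on, so the control is only as trustworthy as that kernel.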

Process sandboxes are guardrails, not isolation.

Container-Based Sandboxes

Containers add filesystem isolation, namespaces, and resource controls. They are a step forward—but the trust boundary is still the shared kernel.

That means:

- A single kernel exploit can compromise every container on a node

- Container escapes are real, frequent, and well documented

- The blast radius is the node, not the workload

For AI agents executing untrusted code, that’s an unacceptable failure model.

Containers were built to run trusted workloads efficiently.

They were never designed to be a hard security boundary.

VM-Level / Kernel-Isolated Sandboxes

This is a different architecture entirely.

With VM-level isolation:

- Each workload gets its own kernel

- There is no shared kernel state

- A compromise in one workload cannot traverse to its neighbors

This model assumes something important: failure is expected.

Instead of trying to prevent every exploit, it ensures that when one happens, the damage is contained by design. A kernel CVE compromises one workload, not the node. A rogue agent can’t move laterally. A popped pod doesn’t force a full cluster teardown.
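The difference in blast radius can be sketched as a toy model. The workload names, the node name, and the `place` helper are made up for illustration, and the model is deliberately simplified: it assumes a kernel exploit gives the attacker everything running on that kernel, and it ignores hypervisor bugs entirely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    node: str
    kernel: str  # identifier of the kernel this workload runs on


def place(names, node, shared_kernel):
    """Schedule workloads on one node, with either a shared or a per-workload kernel."""
    return [
        Workload(n, node, f"{node}-host" if shared_kernel else f"{node}-{n}")
        for n in names
    ]


def blast_radius(workloads, compromised_name):
    """Names of all workloads that share a kernel with the compromised one."""
    victim = next(w for w in workloads if w.name == compromised_name)
    return sorted(w.name for w in workloads if w.kernel == victim.kernel)


names = ["agent", "billing", "auth"]
containers = place(names, "node-1", shared_kernel=True)
vm_isolated = place(names, "node-1", shared_kernel=False)

print(blast_radius(containers, "agent"))   # ['agent', 'auth', 'billing']
print(blast_radius(vm_isolated, "agent"))  # ['agent']
```

Same node, same workloads, same exploit. The only thing that changed is where the kernel boundary sits.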

This isn’t “stronger sandboxing.” It’s post-fail isolation.

Why Policy-First Sandboxing Fails for AI Agents

Most AI agent security today focuses on pre-fail controls:

- Egress filtering

- Credential scoping

- Capability boundaries

- Drift detection

- Runtime policy engines

All of these are good practices. You should use them.

But they all share the same goal: prevent compromise.

AI agents make that goal unrealistic. Their capabilities increasingly resemble those of well-resourced adversaries—and those capabilities are now accessible to nearly anyone.

The question nobody wants to ask is the most important one:

What happens when prevention fails?

If the answer is “tear down the cluster,” you don’t have a sandbox. You have a liability.

What Makes an AI Agent Sandbox Production-Grade?

A production-grade AI agent sandbox isn’t a demo or a weekend project. It has to survive real incidents at fleet scale.

That means:

- Dedicated isolation boundaries (kernel-level, not policy-level)

- Full observability per workload, not aggregated noise

- Predictable performance, not “secure but slow”

- Kubernetes-native operations

- No image rebuilds

- No special-case debugging

- No fragile runtime disguises

If your sandbox breaks observability, complicates operations, or only works on a single node, it won’t survive production.

How Edera Approaches AI Agent Sandboxing

Edera doesn’t treat sandboxing as a tool or a bolt-on feature. We treat it as infrastructure.

Each workload runs in an Edera Zone: an isolated environment with its own kernel. There is no shared kernel state between workloads. Isolation is explicit, not implied.

That changes everything:

- A compromised AI agent is contained by geometry, not policy

- Observability improves because telemetry is per-workload

- Incident response becomes simple: analyze logs, delete the pod

- Other workloads keep running because they never shared fate

This architecture is fast. In our memory and syscall benchmarks, isolated zones match or outperform Docker containers for AI workloads. Security and performance aren’t opposites when the system is designed for both.

Most importantly, this model assumes reality: something will break. And when it does, the damage stops where it should.

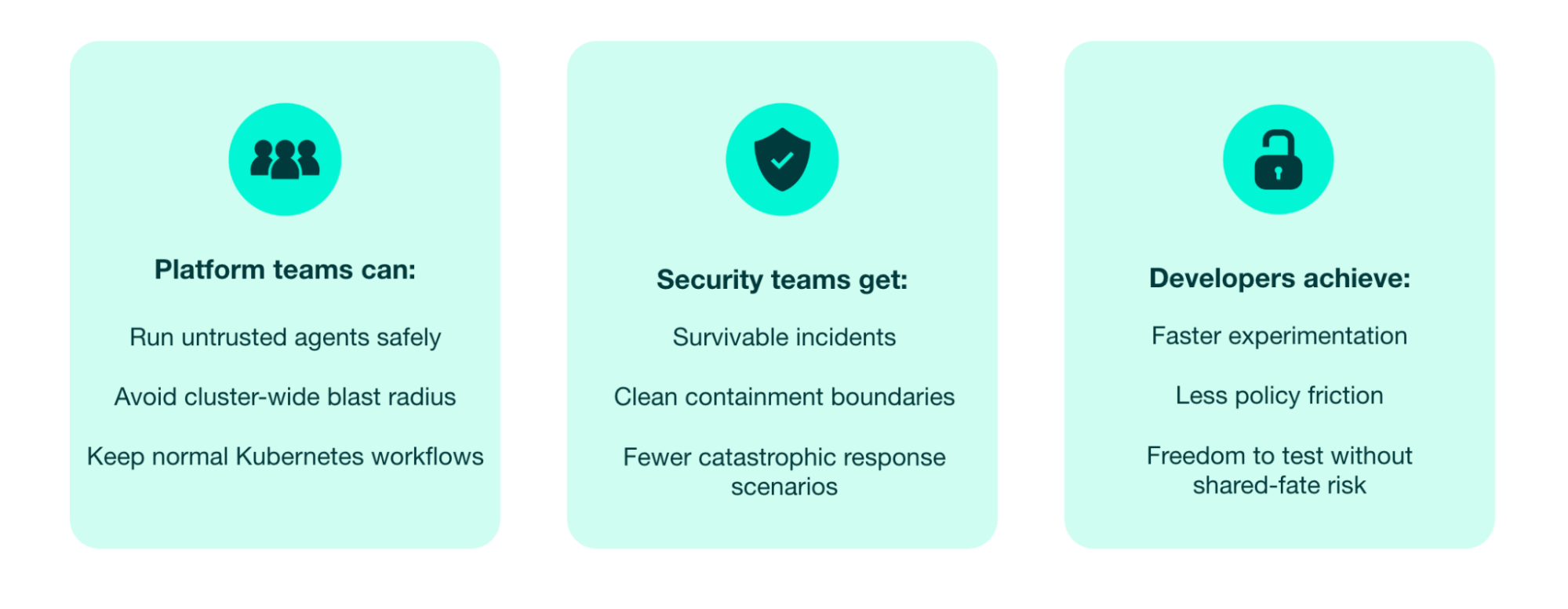

What Strong Isolation Unlocks for AI and Kubernetes

When isolation is built into the infrastructure, security stops being the bottleneck.

This is the same philosophy SRE has used for years: design for failure, not perfection. It’s time security caught up.

The Real Takeaway

AI agent sandboxes aren’t about locking agents down until they’re useless.

They’re about accepting that non-deterministic systems will fail, and making sure that failure doesn’t matter.

Tools try to restrict behavior. Architectures contain failure. Edera builds the post-fail layer.

FAQ

What is an AI agent sandbox in simple terms?

An AI agent sandbox is an isolation boundary that limits the impact of an AI agent when it executes untrusted or unexpected code.

Why aren’t containers enough for AI agents?

Containers share a kernel. A single kernel exploit can compromise every workload on a node, making the blast radius far too large for autonomous agents.

What’s the difference between sandboxing and isolation?

Sandboxing often relies on policies and restrictions. Isolation uses architectural boundaries—like separate kernels—to contain failure even when policies break.

Do AI agent sandboxes hurt performance?

Not inherently. With the right architecture, strong isolation can match or exceed container performance while improving observability.

Is Edera a sandbox or a runtime?

Edera is a hardened runtime that provides production-grade isolation. It’s not a policy layer—it’s an architectural boundary.

Read the full “WTF is...?” series.
