I Looked at the GPU Stack at KubeCon and Now I Can't Sleep

I just got back from KubeCon EU in Amsterdam. 15,000 attendees (Kubecon’s biggest ever!) and every other conversation was about AI agents, GPU clusters, and inference at scale. The energy is infectious, everyone's building and shipping, everyone's talking about what model they're running on what hardware.

Nobody was talking about the security boundary.

At the conference, I had a stroll with our co-founder through the hallway and asked a simple question: "What does the full stack of a GPU on Kubernetes actually look like? Where’s the threat model?" The answer sent me down a rabbit hole. I want to share what I found, because I think the industry needs to have this conversation before something very bad happens.

The GPU-on-Kubernetes Stack, Layer by Layer

I'm going to walk through the GPU-on-Kubernetes stack from the bottom up. We’re about to go deep, so grab some popcorn or your favorite snack. Let’s get started.

Layer 1: The Hardware

At the bottom, you have the GPU silicon (NVIDIA H100, A100, etc.) sitting on a PCIe bus connected to the host CPU. On newer GPUs like the H100, NVIDIA runs a GPU System Processor (GSP), a dedicated ARM core with its own firmware that manages the GPU at a level below the driver. This firmware is signed by NVIDIA, closed-source, and opaque. You trust it because NVIDIA tells you to trust it.

For multi-GPU training, there's NVLink and NVSwitch for direct GPU-to-GPU communication. For multi-node training, InfiniBand or RoCE NICs handle the network traffic. And here's where it gets interesting: GPUDirect RDMA lets the GPU DMA directly to the network card, bypassing the CPU and operating system entirely.

When I hear "bypasses the CPU and OS," I think no virtual memory protections in the path. No kernel-level security controls, no firewalls, no network policy, no eBPF visibility. The GPU is effectively bypassing the computer to talk directly to the network. There is an IOMMU restricting DMA to registered memory regions, so it's not completely lawless, but the OS is fully out of the loop for the data plane. If a GPU context is compromised, the IOMMU prevents it from DMAing to arbitrary host memory, but it doesn't prevent reading or writing RDMA buffers of other tenants sharing the same GPU.

The IOMMU protects the host but it doesn't protect tenants from each other.

Layer 2: The Kernel Drivers

This is where my existential dread started kicking in (or maybe it was just jetlag).

The NVIDIA GPU driver stack consists of four kernel modules loaded into the host Linux kernel:

- nvidia.ko handles GPU memory management, context scheduling, and command submission. This is the primary ioctl interface between userspace and the GPU.

- nvidia-uvm.ko Unified Virtual Memory. It handles automatic page migration between CPU and GPU memory.

- nvidia-modeset.ko handles display management (less relevant for headless compute, but still loaded)

- nvidia-peermem.ko GPUDirect RDMA peer memory. Lets InfiniBand DMA directly to GPU VRAM.

All four are proprietary, closed-source and run in ring 0, the highest privilege level of the CPU. A bug in any of these modules means an attacker has full kernel memory access on the host.

Most insane: these modules are resident on the host kernel for every node in the cluster, even nodes that never schedule a GPU workload. Four proprietary kernel modules expanding your host's attack surface for every node, not just the GPU nodes.

Wiz found critical vulnerabilities in the NVIDIA Container Toolkit (CVE-2024-0132, CVE-2024-0133) that allowed container escape from a crafted container image. You pull a malicious image and it’s game over. NVIDIA publishes driver CVEs regularly (CVE-2024-0090, CVE-2024-0091, etc.). This is the track record of the code running in ring 0 on every GPU node in your cluster.

The whole stack is proprietary. Closed-source code in ring0 processing complex commands from untrusted containers. As a security engineer, this is not making me feel warm and fuzzy about our AI-driven future.

Layer 3: The ioctl Interface - Undocumented and Unauditable

When a container process calls any CUDA function, such as allocating GPU memory, launching a kernel, or copying data, it ultimately translates to:

fd = open("/dev/nvidia0", O_RDWR);

ioctl(fd, NVIDIA_IOCTL_COMMAND, &args);That ioctl crosses from container userspace into the host kernel's nvidia.ko. The driver processes the request in kernel context with full ring 0 privileges.

There are hundreds of ioctl commands. The interface is undocumented, unstable between driver versions, and not covered by any open standard. NVIDIA's recent open-source kernel module initiative has started publishing some ioctl structures, but the firmware and command submission paths remain proprietary. The open-source nouveau driver has reverse-engineered parts of it, but the modern compute paths (CUDA, the stuff that matters for AI) are not covered.

Is this interface memory-safe? No. It's C code in the kernel processing complex structures from untrusted userspace. Is it type-safe? No. Is there a specification an auditor could review? No.

This is the security boundary between an untrusted container and the GPU hardware, and it consists of hundreds of undocumented C functions processing arbitrary user input in ring 0. This is the state of the art in AI infrastructure.

Layer 4: The NVIDIA Container Toolkit and the Illusion of Isolation

When a GPU pod starts on Kubernetes, the NVIDIA container runtime hook does the following:

- Mounts

/dev/nvidia0,/dev/nvidiactl,/dev/nvidia-uvm, and/dev/nvidia-uvm-toolsdirectly into the container. - Bind-mounts the host's NVIDIA driver libraries (

libcuda.so,libnvidia-ml.so, etc.) from the host filesystem into the container. - Sets cgroup device rules to permit access to the GPU device nodes.

No seriously, read that again. The container gets direct device file access to the GPU driver in the host kernel. The host's proprietary driver binaries are mounted into the container. There’s a party at ring0 and everyone is invited. The "isolation" between the container and the GPU hardware is: cgroup device permissions and Linux namespace boundaries.

Have you seen the size of NVIDIA's drivers? They're measured in gigabytes. They're written for performance, not security. NVIDIA is not a security-first company, they're a performance-first company, and every GPU container on your cluster has a direct channel into their proprietary kernel code.

The container boundary for GPU workloads is a used Kleenex.

Layer 5: Kubernetes Device Plugin

The NVIDIA GPU device plugin is the sanest layer in this stack. It advertises GPUs as schedulable resources (nvidia.com/gpu: 1), handles allocation, and supports Multi-Instance GPU (MIG) for hardware-level partitioning on A100/H100.

MIG is actually meaningful for security, it creates hardware-isolated GPU partitions with separate memory controllers. But it limits flexibility (fixed partition sizes, reduced GPU profiles) and most deployments don't use it, especially deployments that need to fit a model into VRAM.

Without MIG, GPU "sharing" is time-slicing: multiple pods take turns on the same GPU with no hardware memory isolation between them. One tenant's model weights and another tenant's inference data coexist in the same VRAM with no boundary.

Layer 6: The Application Stack

At the top: CUDA runtime, cuDNN, cuBLAS, NCCL for multi-GPU communication, and then PyTorch, TensorFlow, vLLM, or whatever inference framework is serving the model. Weights are loaded into GPU VRAM and prompts and responses flow through GPU memory.

We are a long way from the promise of running a container in a minimal, isolated environment with well-understood operating system primitives. This stack is massive, proprietary, and the security model at every layer is "trust NVIDIA."

The Threat Model Nobody's Discussing

If you're a GPU cloud provider (CoreWeave, Together AI, Lambda, or running your own GPU cluster), here's what you're trying to protect:

- Tenant isolation: customer A must not be able to read customer B's GPU memory, model weights, or inference data.

- Host integrity: a compromised container must not escape to the host and pivot to other tenants or infrastructure.

- Model weights: proprietary weights worth millions in training compute sitting in VRAM.

- Inference data: customer prompts containing PII, credentials, trade secrets.

- Infrastructure credentials: Kubernetes service accounts, cloud IAM roles, storage keys.

Here are the threats that will curse my sleep schedule until we get this resolved:

NVIDIA driver ioctl exploitation

Every GPU container ioctls directly into proprietary, closed-source kernel code. Hundreds of undocumented commands, C code processing untrusted input in ring 0. A vulnerability here gives you kernel code execution on the host, access to every container on the node, and access to every GPU's VRAM (every tenant's data). NVIDIA publishes driver CVEs regularly. This is the single biggest risk in the stack and there is no mitigation in standard Kubernetes.

GPU memory snooping

GPU VRAM is not guaranteed to be zeroed between allocations. Without MIG, multiple tenants share the same GPU memory space with no hardware isolation. One tenant's inference data or model weights could leak to the next tenant through uncleared memory. Side-channel attacks on GPU caches and shared schedulers are published academic research, not just speculation.

GPUDirect RDMA data plane bypass

Training traffic between GPUs flows over RDMA without touching the OS. The kernel's firewalls, network policies, and eBPF monitoring tools never see it. A compromised GPU context could potentially read or inject training data on the wire.

Container escape via NVIDIA Container Toolkit

The Wiz vulnerabilities (CVE-2024-0132) demonstrated that a malicious container image could exploit the NVIDIA container runtime's device setup to escape to the host. This vulnerability class exists because the toolkit bind-mounts host driver binaries into the container, a design that creates a direct host-to-container trust bridge.

How Edera Isolates the NVIDIA Driver from the Host Kernel

When I brought this stack teardown to our co-founder, the question turned into: what if the NVIDIA driver wasn't a trusted component in the host kernel? What if it was an untrusted component in a sandbox?

This is the architecture we built.

The Standard Model (Every Kubernetes Cluster Today)

Container userspace

↓ ioctl(/dev/nvidia0)

Host kernel (nvidia.ko) ← proprietary ring-0 code

↓

GPU hardware

A driver exploit compromises the host kernel. Every pod on the node is owned, every GPU on the node is exposed. Game over.

The Edera Model (Driver Isolation)

Container zone userspace

↓ ioctl (paravirtual GPU device)

Container zone kernel (clean, no NVIDIA modules)

↓ proxied across zone boundary

Driver zone kernel (nvidia.ko runs here, isolated)

↓

GPU hardware

The container zone never touches the real NVIDIA driver. It sees a paravirtualized GPU device - a clean, controlled interface. Its GPU requests are forwarded through the zone boundary to a separate, isolated driver zone that loads nvidia.ko and talks to the hardware.

The host kernel never loads nvidia.ko. The proprietary driver code runs in an untrusted zone, not in your host's ring 0. This is the same architectural pattern as Xen driver domains, a pattern that's been proven in production for over a decade.

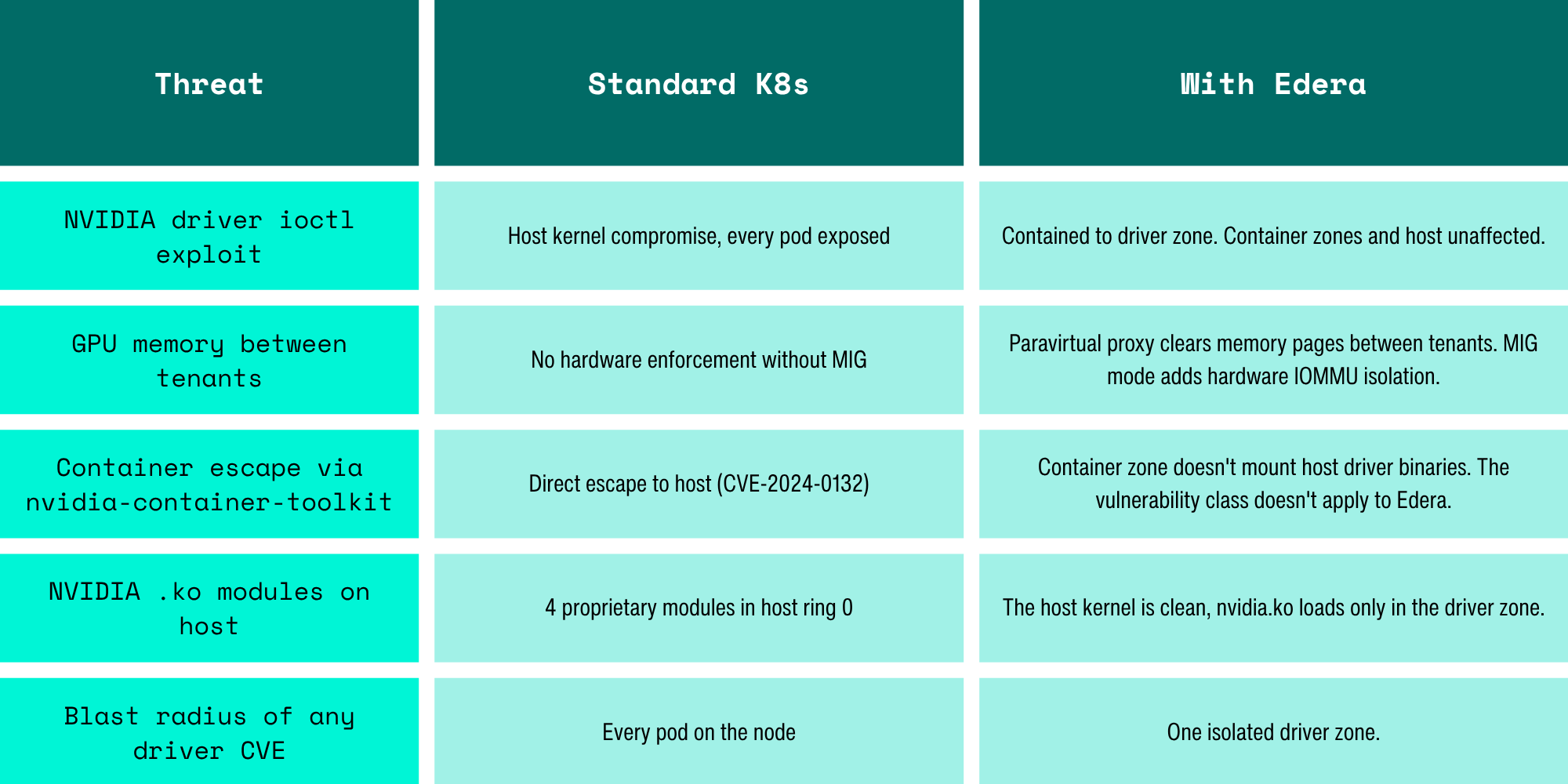

What Changes With Driver Zone Isolation

Let’s Be Honest: What This Architecture Does Not Solve

This doesn't solve everything.

GPU firmware is still a black box. The GSP firmware on H100+ runs below our isolation boundary. A firmware-level compromise is below what any hypervisor can contain. This is an industry problem, not an Edera problem.

GPUDirect RDMA data plane. GPU-to-NIC DMA for RDMA traffic doesn't flow through the zone boundary. Our paravirtualized IOMMU restricts what memory regions the GPU can access, which limits blast radius, but we're not in the data path for RDMA.

GPU silicon side channels. Cache timing, scheduler contention, power analysis within the GPU itself - these are hardware-level problems that require hardware-level solutions. No software isolation can fix them.

A compromised driver zone can read its own tenant's data. The isolation protects other tenants and the host, but the tenant whose driver zone is compromised has their data exposed within that zone. The blast radius is bounded, not eliminated.

The Conversation We Need to Have

The GPU stack on Kubernetes is the largest unexamined attack surface in cloud-native infrastructure. Four proprietary kernel modules, an undocumented ioctl interface with hundreds of commands, direct device passthrough from untrusted containers, and a security model that amounts to "trust NVIDIA" at every layer.

The industry is deploying this at scale for multi-tenant AI inference, processing sensitive customer data through GPU memory, with container boundaries that were never designed for this threat model.

We built Edera because we believe the answer to "how do you secure untrusted workloads?" is structural isolation, not policy. For GPU workloads, that means treating the NVIDIA driver as what it is: a massive, proprietary, historically-vulnerable piece of code that should not run in your host kernel's ring 0. It should run in a sandbox. And every tenant's GPU requests should cross an isolation boundary, not have direct access to a shared driver.

If you're running multi-tenant GPU workloads and the security of your tenants' data matters, you should be asking yourself how you’re going to solve these problems.

-3.avif)