Securing Agentic AI Systems with Hardened Runtime Isolation

Agentic AI systems are rapidly transforming enterprise business processes. These systems can ingest and analyze data at machine scale, identify intricate patterns, and coordinate responses across diverse tools and data sources simultaneously. From customer support to research to data analysis, large enterprises are starting to see the benefits of augmenting their workforces and magnifying the impact of their employees.

Because of the value they provide, agents are being deployed both internally and in customer-facing roles. Customer-facing agents are just as impactful, but because they consume customer data at scale, it is vital to ensure that multi-tenant agent deployments do not come at the expense of data privacy and security.

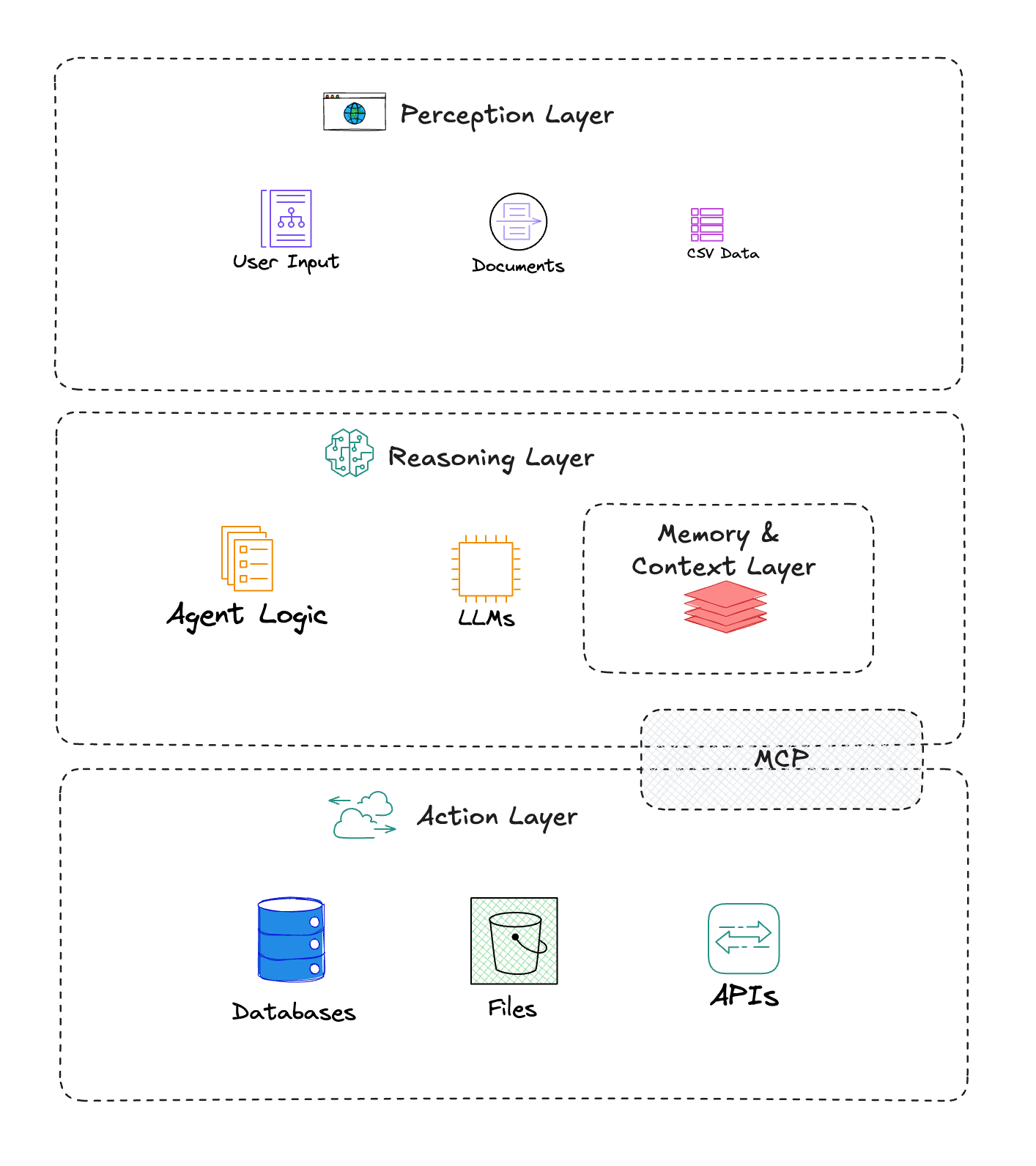

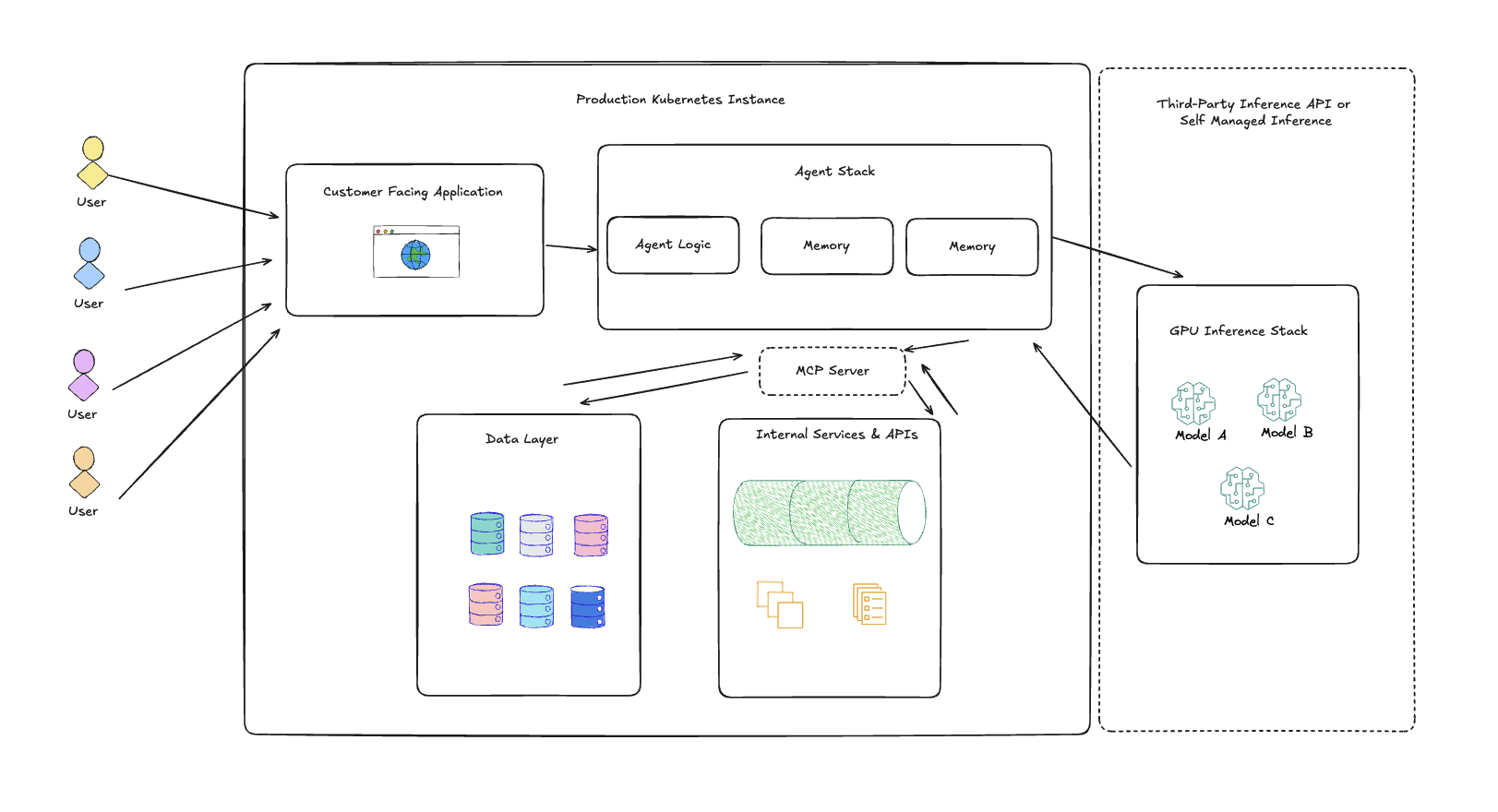

AI agents introduce a new architecture that can increase the attack surface of enterprise applications:

- Perception Layer: Responsible for ingesting data.

- Reasoning Layer: Houses the Large Language Model (LLM) and its operational logic.

- Action Layer: Executes commands and interacts with external systems, such as APIs, databases, file systems, and SaaS applications. The Model Context Protocol (MCP) is a standard way for the agent to access data and other resources.

- Memory/Context Layer: Stores conversation and state, allowing the agent to maintain context and learn over time.
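To make the four layers concrete, here is a minimal sketch of how one piece of input passes through all of them in a single agent step. Everything in it is a hypothetical illustration: the `Agent` class, the `reason` and `act` callables, and the tuple stored in memory are assumptions made for this example, not part of any real agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy agent wiring the four layers together (hypothetical shape)."""
    reason: Callable[[str, list], str]  # Reasoning layer: stand-in for the LLM
    act: Callable[[str], str]           # Action layer: stand-in for a tool call
    memory: list = field(default_factory=list)  # Memory/context layer

    def step(self, raw_input: str) -> str:
        # Perception layer: ingest and normalize external data.
        observation = raw_input.strip()
        # Reasoning layer: decide what to do, given the input and prior context.
        decision = self.reason(observation, self.memory)
        # Action layer: execute the decision against an external system.
        result = self.act(decision)
        # Memory layer: persist the exchange for later steps.
        self.memory.append((observation, decision, result))
        return result


# Usage with trivial stand-ins for the model and the tool.
agent = Agent(reason=lambda obs, mem: f"echo:{obs}", act=lambda d: d.upper())
output = agent.step("  hi ")
```

The point of the sketch is that the same externally supplied string reaches the reasoning, action, and memory layers in one pass, which is why a weakness in any one layer affects the others.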

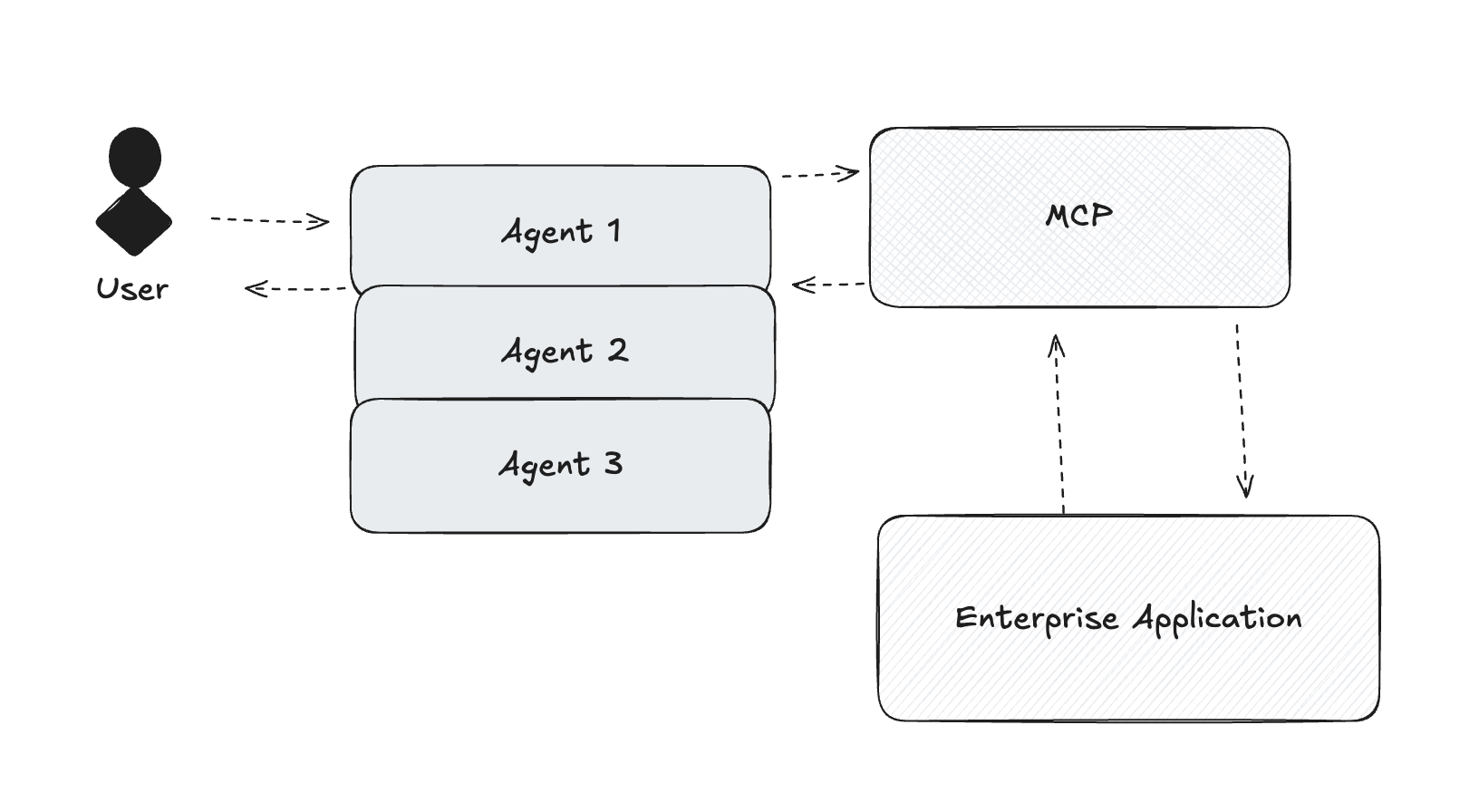

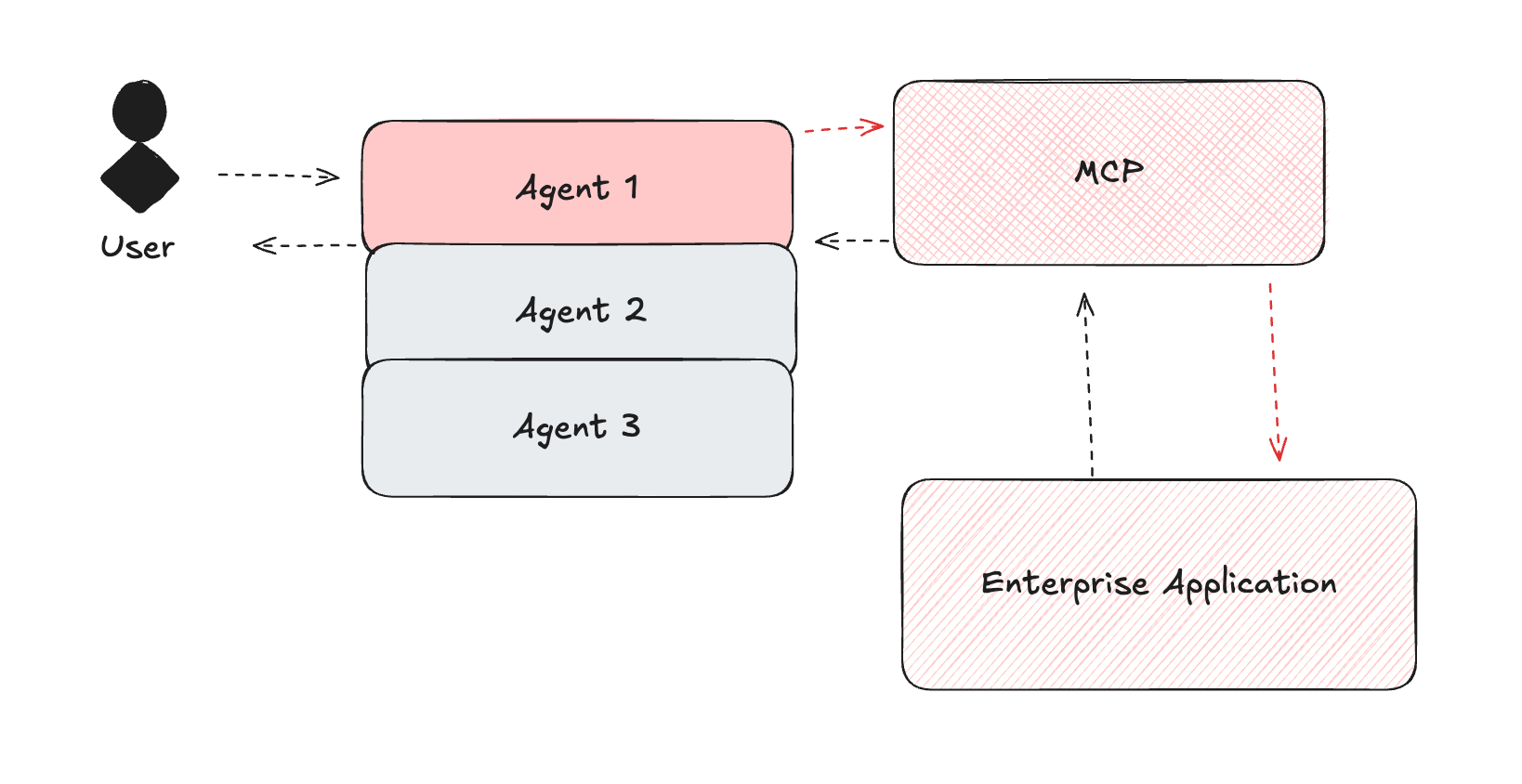

Many advanced deployments also involve Multi-Agent Systems, where multiple agents collaborate and coordinate to achieve complex goals.

While their autonomy promises efficiency and economic potential, Agentic AI introduces a fundamentally new and expanded threat surface. Traditional security paradigms, which rely on clearly defined trust boundaries and network perimeters, are often insufficient because agentic systems frequently operate with a unified authentication context, effectively "collapsing security boundaries" across multiple platforms. This creates a dynamic, hard-to-predict attack surface.

Modern applications are built as distributed microservices, deployed across dynamic infrastructure, and often powered by autonomous AI agents. As a result, the runtime is no longer a clearly defined system but a sprawling, ephemeral execution layer shared by multiple tenants, containers, and workloads. A runtime vulnerability can therefore provide unrestricted access to the host and every other workload on it, a fundamental breakdown in isolation.

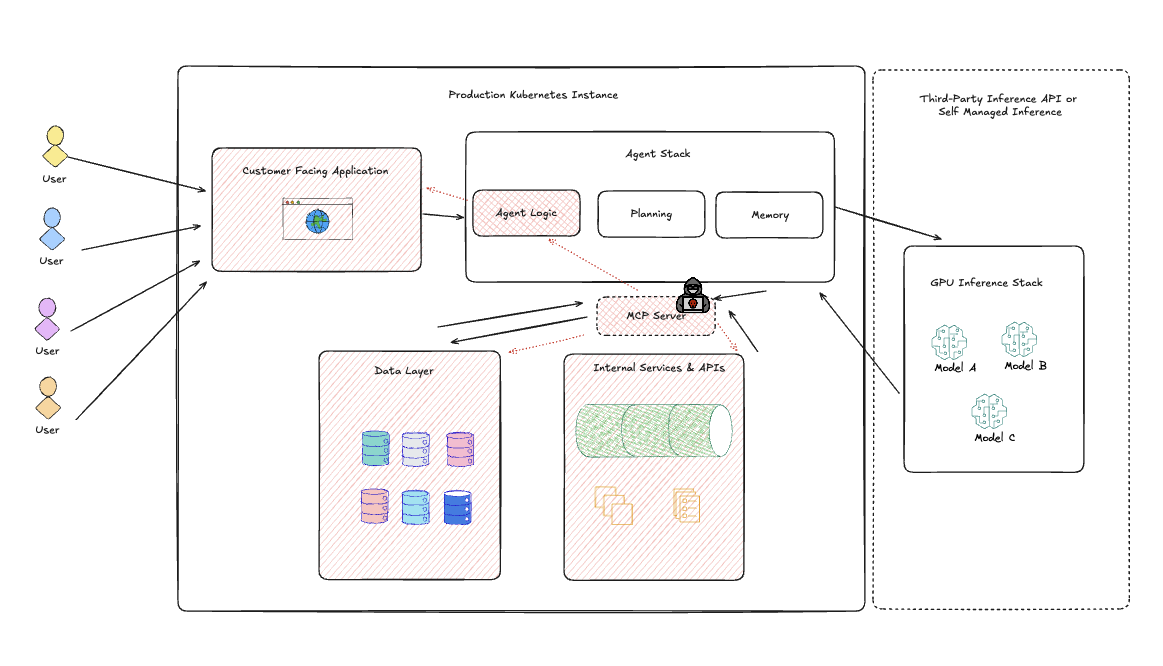

Without proper safeguards, even a single compromised component or a misconfigured interaction can lead to cascading failures or unauthorized actions across an entire enterprise. Key security vulnerabilities highlighted by recent cybersecurity events, and best addressed through true isolation of components, include:

- Prompt Injection and Agent Hijacking: Attackers embed malicious instructions within data that an AI agent legitimately ingests. Because the agent lacks clear separation between trusted internal instructions and untrusted external data, it can be tricked into executing harmful commands, leading to remote code execution or mass data exfiltration.

- Tool Misuse and Malicious Code Injection: Agents can be coerced into misusing external tools (like file systems or APIs) or executing injected malicious code through deceptive prompts. This is particularly dangerous if the agent operates with elevated privileges, creating a direct path for privilege escalation.

- MCP Exploits: When an AI agent operates across multiple platforms through a standardized interface such as MCP servers and clients, a single point of compromise can let attackers propagate access to every connected system, then move laterally across the environment and escalate privileges.

- Supply Chain Attacks: The supply chain for agentic AI includes pre-trained models, datasets, and software frameworks and libraries. Attackers can introduce malicious components that poison agents upon import or alter an agent’s code before deployment, leading to cascading attacks.

- Runtime Vulnerabilities: Traditional detection-based security often fails because attackers bypass rules by chaining subtle behaviors or abusing valid credentials. Without runtime hardening, defenders are forced to respond to symptoms instead of eliminating the root causes. AI workloads introduce new risks because agents behave autonomously, generate dynamic code, hold credentials, and interact with internal systems, so a compromised agent effectively becomes a trusted attacker. This is exacerbated at the hardware layer, where GPUs are often shared, leading to side-channel leakage, memory snooping, and unauthorized execution pathways.
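The prompt injection and tool misuse risks above both come down to untrusted data steering privileged actions. As a minimal sketch of one application-level mitigation, the example below places a deny-by-default gate in front of the action layer. All names here (`ToolCall`, `ToolGate`, the `derived_from_untrusted` flag, and the tool names) are hypothetical and assumed for illustration; tracking whether untrusted data influenced a call is itself a hard problem that this sketch does not solve.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolCall:
    """A tool invocation proposed by the reasoning layer (hypothetical shape)."""
    tool: str
    argument: str
    derived_from_untrusted: bool  # True if external data influenced this call


@dataclass
class ToolGate:
    """Deny-by-default gate in front of the action layer (illustrative only)."""
    allowed_tools: frozenset
    # Tools that must never run when untrusted input influenced the call.
    sensitive_tools: frozenset = frozenset()
    audit_log: list = field(default_factory=list)

    def authorize(self, call: ToolCall) -> bool:
        if call.tool not in self.allowed_tools:
            decision = False  # not on the allowlist: deny
        elif call.derived_from_untrusted and call.tool in self.sensitive_tools:
            decision = False  # sensitive tool steered by untrusted data: deny
        else:
            decision = True
        # Record every decision so denials can be reviewed later.
        self.audit_log.append((call.tool, decision))
        return decision


gate = ToolGate(
    allowed_tools=frozenset({"search_docs", "send_email"}),
    sensitive_tools=frozenset({"send_email"}),
)
```

A gate like this narrows what a hijacked agent can request, but it runs inside the same process as the agent. It complements runtime isolation rather than replacing it.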

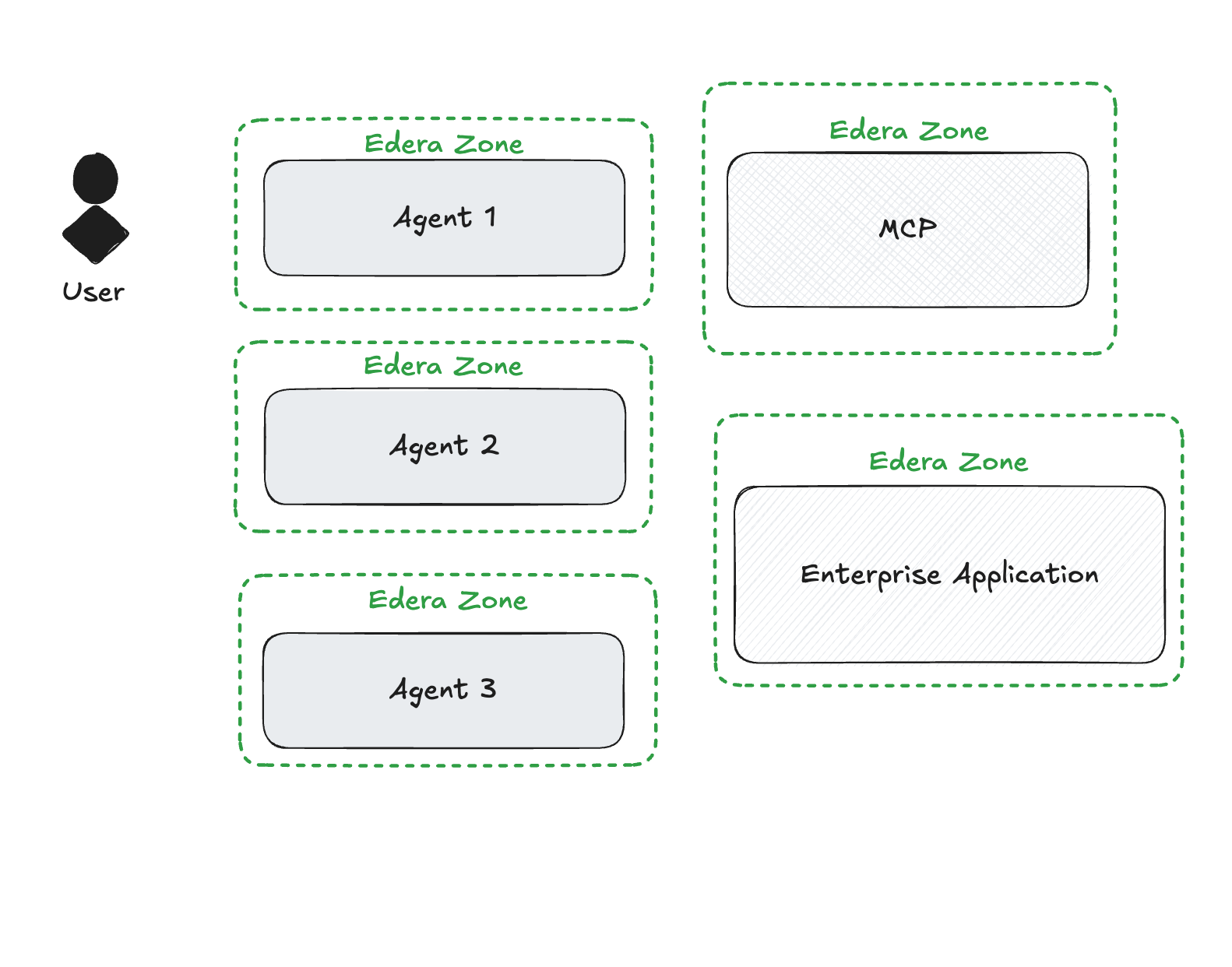

The critical need for a new security foundation is clear: security embedded in the runtime itself, where execution can be constrained, not just observed. This is where Edera comes in. Edera reimagines the container runtime by redesigning the core architecture from the hardware up, bridging the gap between how containers ship and how they should run.

A hardened runtime, as enabled by Edera, replaces reactive alert chasing with proper isolation boundaries, fundamentally preventing categories of attacks by disallowing the conditions that enable them. Edera helps by launching all containers within a lightweight micro VM, which fundamentally stops container escapes and associated attacks. This approach ensures:

- True Execution Isolation: Each workload runs in a sandboxed zone with no implicit access to networks, APIs, or peer containers. This isolation is enforced by deeply constraining the process environment: blocking shared memory, blocking open device access, and protecting all other containers that share the host with the agent.

- Attack Surface Minimization: The runtime significantly reduces what code can do by default, preventing unscoped syscalls, removing unnecessary privileges, and eliminating host-level visibility. It provides only what the workload needs to function, making privilege escalation and resource abuse structurally unlikely.

These behaviors align with security hardening benchmarks and are enforced continuously by the runtime itself, without relying on static policies or dynamic rule evaluation. For AI and GPU-driven jobs, a hardened runtime tightly bounds every workload, restricting what they can see and touch, and explicitly authorizing memory regions, device interfaces, and interprocess communications. Nothing is assumed to be safe without verification. The hardened runtime is the new security boundary, where trust must be evaluated and enforced.
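The "explicitly authorized, nothing assumed safe" model can be pictured as a deny-by-default grant table. The sketch below is a conceptual illustration only: `Resource`, `Grant`, and `WorkloadPolicy` are hypothetical names invented for this example and do not represent Edera's configuration format or API. In a hardened runtime the equivalent checks are enforced by the isolation boundary itself, not by application code.

```python
from dataclasses import dataclass
from enum import Enum


class Resource(Enum):
    DEVICE = "device"
    IPC = "ipc"
    NETWORK = "network"


@dataclass(frozen=True)
class Grant:
    """One explicit authorization (hypothetical shape)."""
    resource: Resource
    target: str  # e.g. a device name or a peer workload name


@dataclass(frozen=True)
class WorkloadPolicy:
    """Deny-by-default policy: only listed grants are permitted."""
    name: str
    grants: frozenset = frozenset()

    def is_allowed(self, resource: Resource, target: str) -> bool:
        # Anything not explicitly granted is denied.
        return Grant(resource, target) in self.grants


# A GPU job that may touch exactly one device and nothing else.
policy = WorkloadPolicy(
    name="inference-job",
    grants=frozenset({Grant(Resource.DEVICE, "gpu0")}),
)
```

With this policy, `policy.is_allowed(Resource.DEVICE, "gpu0")` is true, while a request for a second GPU, a peer workload's IPC channel, or any network target is refused because no grant names it.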

The business value of implementing true component isolation with a hardened runtime solution like Edera is clear and compelling:

- Ensured Data Privacy and Security in Multi-Tenant Deployments: In complex, multi-tenant or multi-agent environments where runtime is a shared execution layer, isolation prevents a localized breach from escalating into a systemic compromise. It transforms your agentic AI deployments from potential liabilities into resilient, trustworthy assets. By applying a hardened runtime at the granular component level, every interaction is treated as untrusted and explicitly authorized, effectively containing the blast radius of any potential attack. This is crucial as AI agents can gain access to even the most secure parts of a network, and without isolation, a single runtime weakness can give attackers full control.

- Accelerated Deployment Without Fear of Data Breaches: You can deploy agentic solutions faster and with confidence, knowing that foundational security is built in, not bolted on. Moving beyond superficial "guardrails" to deep-seated architectural controls avoids costly retrofitting and potential breaches down the line. This proactive, defense-in-depth approach, spanning the entire agent lifecycle, reduces the burden on security and platform teams by removing the possibility of entire classes of attacks. It allows organizations to drive safe AI innovation without trading robust security for rapid adoption.

By adopting a foundational strategy of architectural isolation, especially with a hardened runtime solution that enables true execution isolation, you can confidently harness the immense power of Agentic AI, transforming it into a secure and compliant asset that propels your business forward.
